Neuro-symbolic AI technology

A unique patented technology that combines the safety of symbolic computing with the performance of deep learning

The platform developed by Yona Robotics is based on proprietary, patented symbolic AI technology (explainable mathematical models and rules) that models the robot’s physical environment while ensuring operational safety.

Deep learning modules enrich this representation of the environment with semantic recognition and with the identification of humans or of specific objects relevant to a given application. This lets the robot interpret the scene in which it operates more accurately and make relevant decisions, thereby improving its performance.

These technologies have been developed over more than 10 years by the Inria Chroma team. They are protected by three patents, the exclusive rights to which have been granted to Yona Robotics.

Understanding Yona technology

Occupancy grids

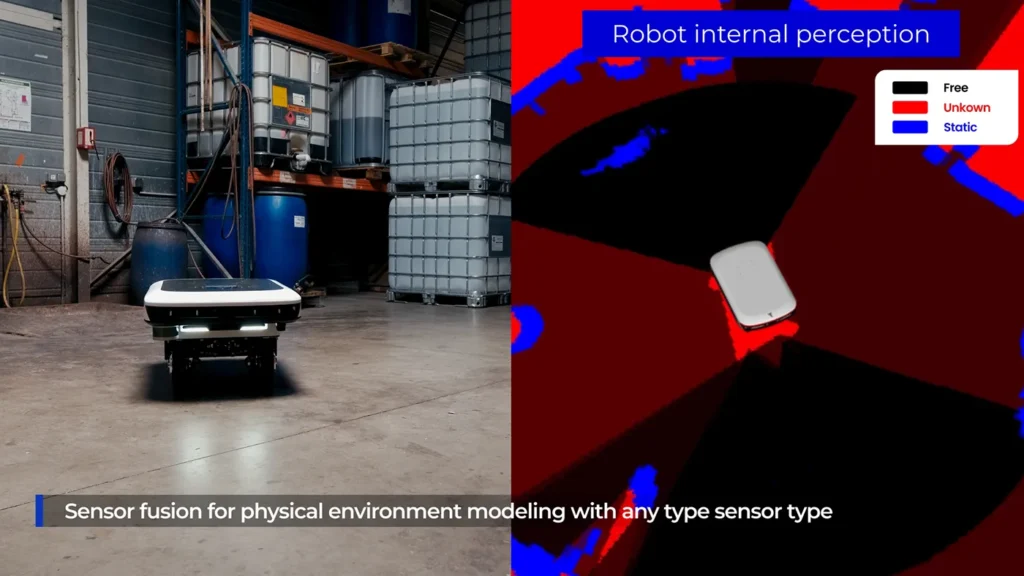

Yona Robotics’ technology builds and relies on probabilistic occupancy grids to represent the robot’s environment, using data from various sensors (lidar, radar, RGB-D camera, ultrasonic, time-of-flight, etc.).

Using probabilistic models and a Bayesian approach, the data from each sensor is interpreted and its uncertainties are quantified. The result is then merged with the data from the other sensors and with previous data.
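
As an illustration of this kind of Bayesian fusion, the sketch below uses the textbook log-odds occupancy update. It is not Yona Robotics’ patented method: the function names and the per-sensor probabilities are assumptions chosen for the example, and each reading is treated as independent evidence about a single cell.

```python
import math

def logit(p):
    """Convert a probability into log-odds."""
    return math.log(p / (1.0 - p))

def sigmoid(l):
    """Convert log-odds back into a probability."""
    return 1.0 / (1.0 + math.exp(-l))

def fuse_cell(prior, measurements):
    """Fuse independent occupancy estimates for one grid cell.

    prior: occupancy probability before any reading (0.5 means unknown).
    measurements: P(occupied | reading) values, one per sensor reading,
    as produced by a hypothetical inverse sensor model.
    """
    prior_l = logit(prior)
    l = prior_l
    for p in measurements:
        # Each reading contributes its evidence relative to the prior.
        l += logit(p) - prior_l
    return sigmoid(l)

# Illustrative values only: a confident lidar hit and a noisier ultrasonic hit.
fused = fuse_cell(0.5, [0.9, 0.6])
```

A less certain sensor (probability close to 0.5) moves the fused estimate only slightly, which is how the quantified uncertainty of each sensor weights its contribution.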

Based on the merged and filtered data, the robot constructs a detailed, unified, and dense representation of its immediate environment in elementary volumes (a few cubic centimeters). Reprojecting all of these volumes yields a two-dimensional representation in which each cell holds the probability of being in each possible state. In the color code used here, black represents the space that the robot can traverse, blue represents obstacles, and red represents unknown space (no sensor data).
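
The reprojection step can be sketched as follows. This is a simplified illustration under stated assumptions, not the actual method: each vertical column of volumes is collapsed by keeping its highest occupancy probability, and the thresholds and state names used to pick a display state are hypothetical.

```python
FREE, OCCUPIED, UNKNOWN = "free", "occupied", "unknown"

def project_column(column):
    """Collapse one vertical column of voxel probabilities into a 2D cell.

    column: occupancy probabilities over height, None for unobserved voxels.
    Returns None when no voxel in the column has been observed.
    """
    observed = [p for p in column if p is not None]
    return max(observed) if observed else None

def classify(p, free_below=0.3, occupied_above=0.7):
    """Pick a display state for a cell (thresholds are assumptions)."""
    if p is None:
        return UNKNOWN
    if p >= occupied_above:
        return OCCUPIED
    if p <= free_below:
        return FREE
    return UNKNOWN  # evidence is too weak to decide either way

def project_grid(voxels):
    """voxels[row][col] is a column of probabilities over height."""
    return [[classify(project_column(col)) for col in row] for row in voxels]

# A 2 x 2 footprint with two height levels per column.
voxels = [
    [[0.1, 0.05], [0.1, 0.9]],
    [[None, None], [0.5, None]],
]
grid_2d = project_grid(voxels)
```

Taking the maximum over a column is a conservative choice: a single occupied volume at any height marks the whole cell as an obstacle.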

Semantic recognition and human identification modules

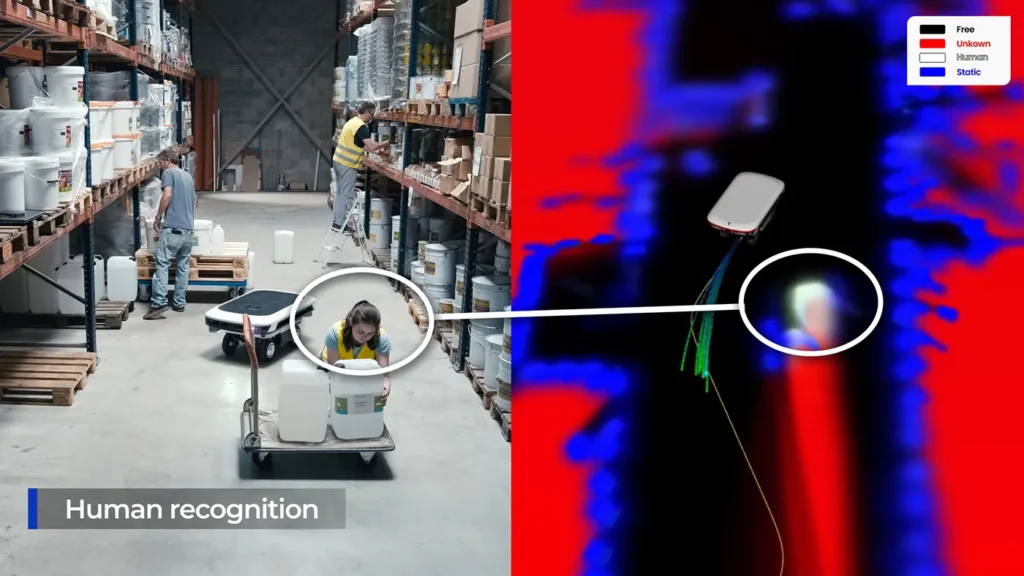

The states filtered in Yona Robotics’ occupancy grids can be defined as desired: application-specific states, representing important objects or agents to be taken into account, can be integrated directly into the grids. For example, if people are likely to be present near the robot, semantic recognition modules based on deep neural networks can be deployed as a new source of information, and their output is merged and filtered with the data from the other sensors. Recognizing humans lets the robot adapt its behavior, which supports safety and social acceptance, two key conditions for successful cohabitation. This is referred to as contextual navigation.
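
One way to picture an application-specific state is a cell that holds a probability for each state and is updated with Bayes’ rule whenever the recognition module reports something. The sketch below is only an illustration: the state names, the prior belief, and the detector likelihoods are all hypothetical values, not figures from Yona Robotics.

```python
STATES = ("free", "static_obstacle", "human")

def bayes_update(belief, likelihood):
    """Update a per-cell belief with one observation.

    belief: dict mapping each state to its current probability.
    likelihood: dict mapping each state to P(observation | state).
    """
    unnormalized = {s: belief[s] * likelihood[s] for s in STATES}
    total = sum(unnormalized.values())
    return {s: v / total for s, v in unnormalized.items()}

# Belief from range sensors alone: something is there, probably static.
belief = {"free": 0.2, "static_obstacle": 0.7, "human": 0.1}

# Hypothetical likelihood of the neural network reporting "person"
# for this cell, given each true state.
person_detected = {"free": 0.05, "static_obstacle": 0.10, "human": 0.85}

updated = bayes_update(belief, person_detected)
```

After the update the "human" state becomes the most probable one for this cell, which a planner could use to slow down or keep a larger distance.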

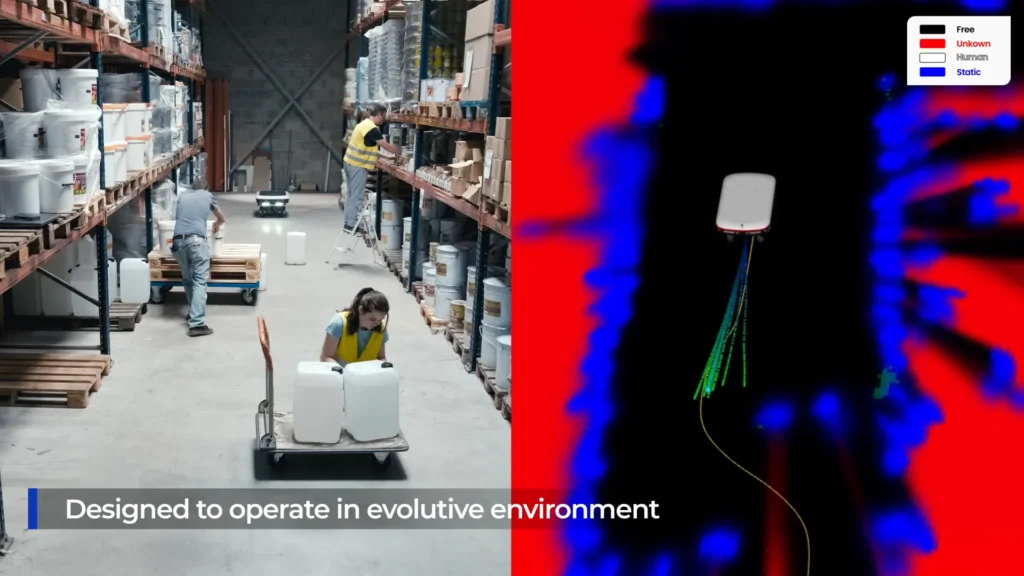

Navigation in changing environments

Thanks to Yona Robotics’ technology, robots can adapt to changing environments by deviating from predefined trajectories. The robot respects the final destination or waypoints imposed on it (usually by the fleet manager). Between these waypoints, it generates its own trajectories based on the environment it encounters, which lets it optimize its travel time while avoiding obstacles. No prior knowledge of the environment is necessary.
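
The idea of replanning between two imposed waypoints can be sketched on a small grid. The example uses a plain breadth-first search as a stand-in planner; it is an assumption made for illustration and says nothing about the planner Yona Robotics actually uses. The grid encoding (0 for free, 1 for blocked) is also hypothetical.

```python
from collections import deque

def plan(grid, start, goal):
    """Shortest 4-connected path from start to goal, or None if blocked.

    grid[r][c] == 0 means free, 1 means blocked.
    start and goal are (row, col) tuples.
    """
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the parents to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None

# Two waypoints on the top row of an empty 3 x 3 area.
grid = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
direct = plan(grid, (0, 0), (0, 2))

# An obstacle appears between them: the robot replans around it.
grid[0][1] = 1
detour = plan(grid, (0, 0), (0, 2))
```

The waypoints stay fixed in both calls; only the trajectory between them changes when the grid does.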

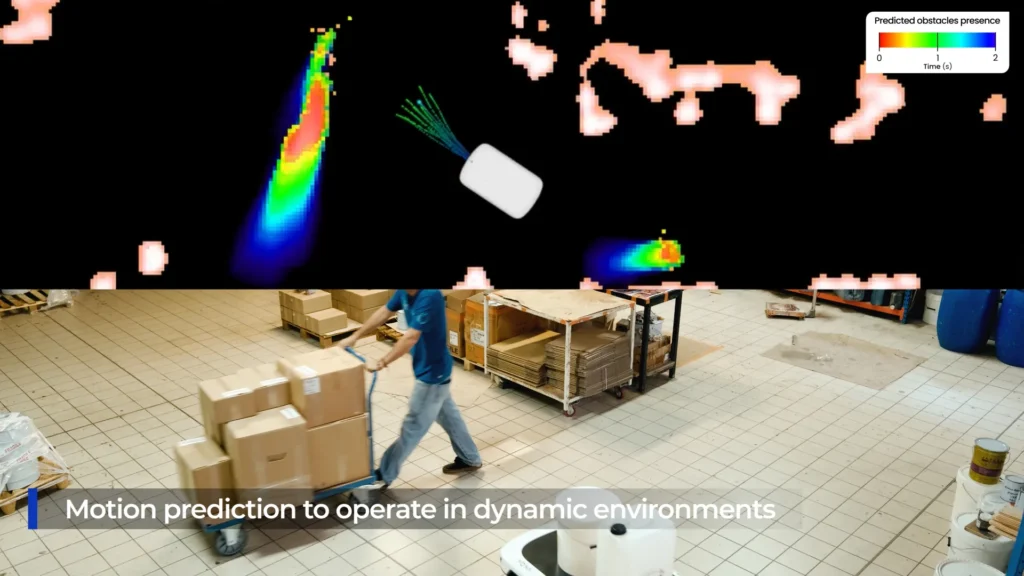

Predictive navigation in dynamic environments

By detecting and modeling dynamic objects, Yona Robotics’ technology is able to predict their future position and movement. The robot can then generate trajectories for itself that are adapted to this dynamic environment. This is known as predictive navigation.
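
A minimal sketch of the prediction idea, assuming a constant-velocity motion model (a common simplification, not necessarily the model used by Yona Robotics). The function names, the time step, and the safety radius are hypothetical.

```python
import math

def predict(position, velocity, horizon, dt):
    """Constant-velocity prediction at t = dt, 2*dt, ..., horizon."""
    steps = int(round(horizon / dt))
    x, y = position
    vx, vy = velocity
    return [(x + vx * k * dt, y + vy * k * dt) for k in range(1, steps + 1)]

def first_conflict(robot_path, obstacle_path, safety_radius):
    """Index of the first time step where the two paths come too close."""
    for k, ((rx, ry), (ox, oy)) in enumerate(zip(robot_path, obstacle_path)):
        if math.hypot(rx - ox, ry - oy) < safety_radius:
            return k
    return None

# Robot driving along x at 1 m/s; pedestrian crossing its path at 1 m/s.
robot_path = predict((0.0, 0.0), (1.0, 0.0), horizon=3.0, dt=0.5)
pedestrian_path = predict((3.0, -2.0), (0.0, 1.0), horizon=3.0, dt=0.5)

conflict = first_conflict(robot_path, pedestrian_path, safety_radius=0.8)
```

When `first_conflict` returns an index rather than `None`, the robot knows in advance that its current trajectory will pass too close to the moving object and can generate a different one.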